This project was an industrial collaboration that aimed to analyse potato crop yields using an on-harvester vision system to detect and size tubers as they pass along the conveyor. Prior to this work, the generally accepted technique was to manually sample a small area of the field and extrapolate the findings; however, tuber sizes can vary across a field, so these estimates, which are used to forecast supply for retailers, tend to be very error-prone.

The goal was to provide much more accurate yield estimates, in real time, as the harvest is taken. The system used a low-cost depth sensor to segment objects of interest from the background and estimate their 3D shape. The system was trialled in-field and resulted in a patent application and eventual productisation by the industrial partner.

Key Technologies

C++ | OpenCV | OpenNI | Hardware integration

How Did It Work?

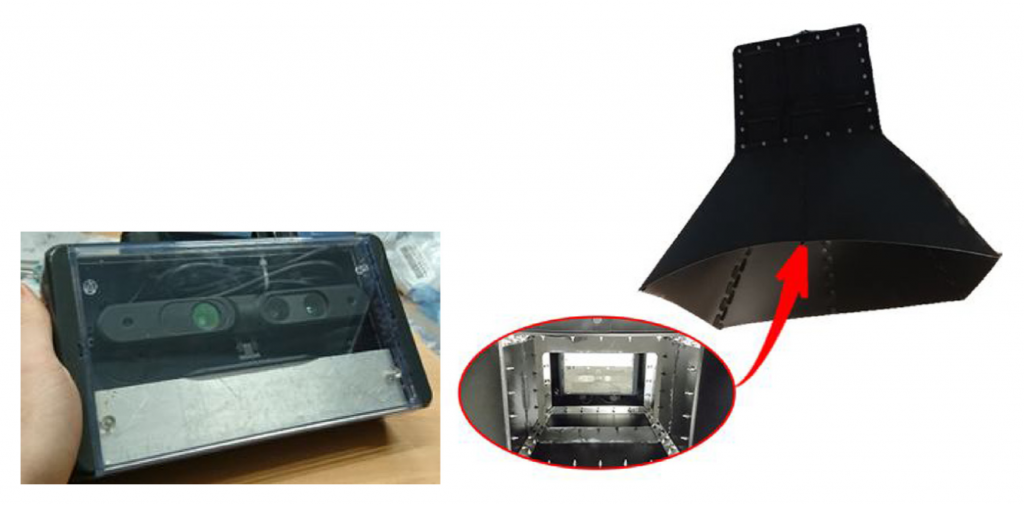

The system hardware comprised two key components, an imaging unit and a processing unit, both designed to be mounted directly on the harvester platform and powered from the tractor’s 12V power supply. The imaging unit provides depth maps of the harvester conveyor belt, which are used to segment the tubers and size them from their measured dimensions.

The Imaging Unit

Imaging was performed using an ASUS Xtion Pro structured light depth sensor, streamed to the processing unit. Since the system was designed to work outdoors, natural light interference was a real problem in the original prototype. The Xtion images at 850nm, a wavelength which is prevalent in natural sunlight. We therefore developed a bespoke, rugged shroud and mounting mechanism to reduce interference and allow the camera position to be adjusted. The camera itself was mounted in an IP68 housing, which was empirically shown not to affect the quality of imaging.

The Processing Unit

The processing unit consisted of an IP68-rated protective casing, inside which was mounted an Intel NUC, a USB hub and an OpenUPS uninterruptible power supply. The UPS was used to stabilise the voltage from the tractor feed and to allow the system to shut down safely when the tractor was turned off (using custom system notifications).

User feedback was provided using an Arduino. We eventually chose a single LED to indicate detection of a tuber, but during development, we experimented with a number of indicator arrangements.

The Algorithm

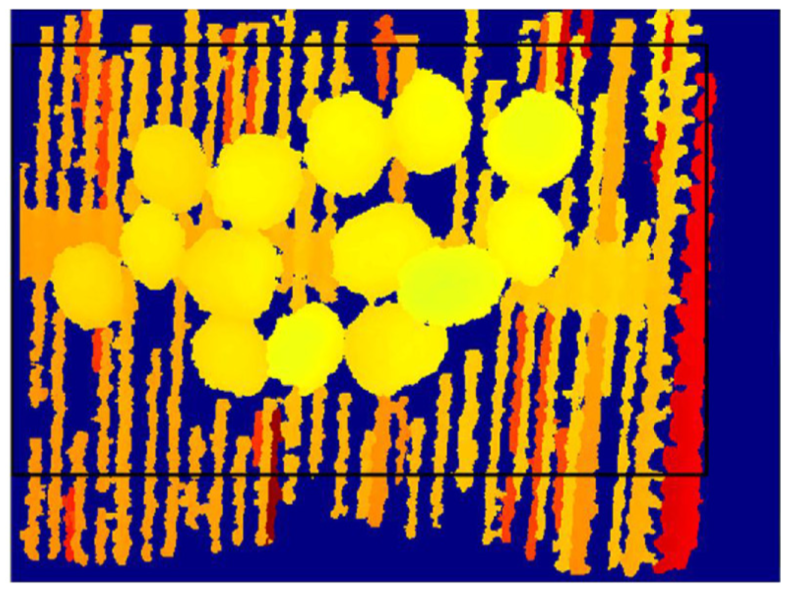

The metrology algorithm is relatively simple. We isolate an ROI during precalibration to ensure that artefacts from the shroud do not affect image quality.

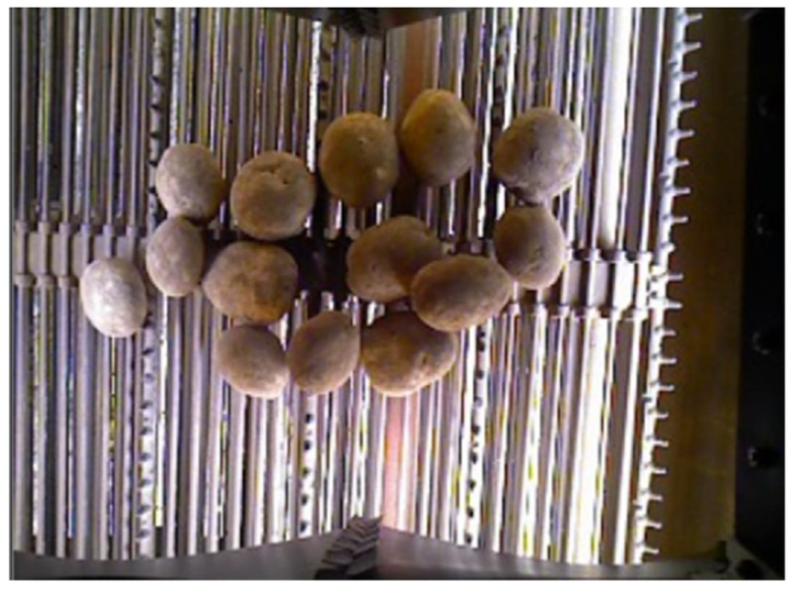

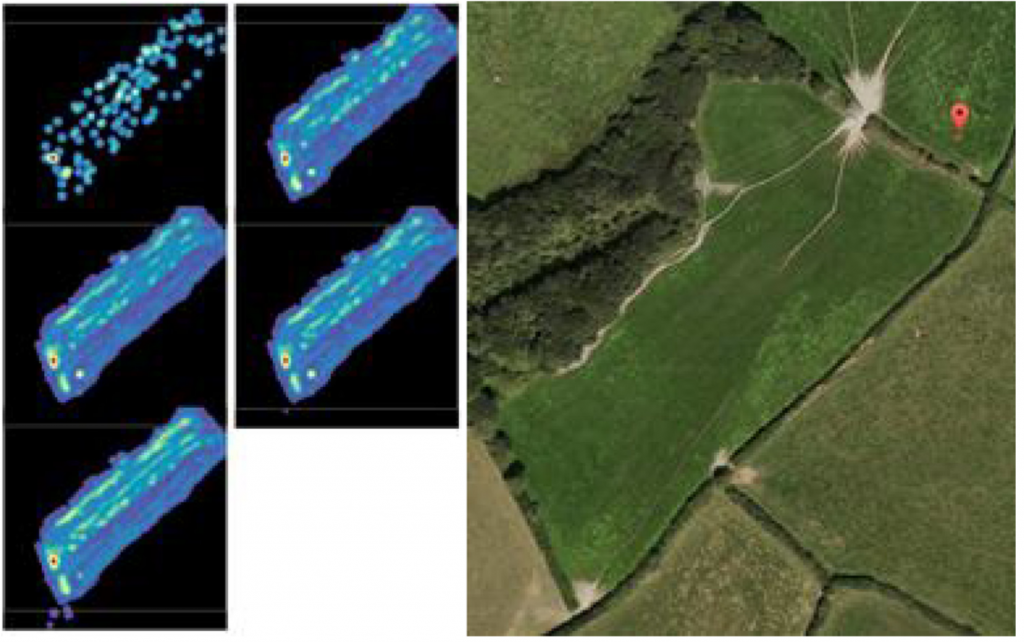

Once we capture depth images of the tubers, we normalise out the conveyor belt based on its depth (auto-calibrated by first imaging without any potatoes on the harvester), and are left with only candidate potatoes. We further clean up the image by working on the assumption that the 2D profile of a potato is approximately elliptical, so objects that do not meet this prior are discarded automatically.
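As a rough illustration of the belt-removal step, the sketch below subtracts a reference depth map of the empty belt from each incoming frame and keeps only pixels that stand proud of it. This is a minimal sketch rather than the production code: the function name `heightAboveBelt`, the `minHeightMm` noise threshold, and the convention that depth is in millimetres with 0 meaning "no reading" are all assumptions made for the example.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Row-major depth map in millimetres. A value of 0 is assumed to mean
// "no valid reading" for that pixel.
using DepthMap = std::vector<std::uint16_t>;

// Returns the height of each pixel above the empty belt, in millimetres.
// `belt` is a reference depth map captured with no potatoes present.
// Pixels less than `minHeightMm` (hypothetical noise threshold) above the
// belt are treated as belt and set to 0.
std::vector<std::uint16_t> heightAboveBelt(const DepthMap& frame,
                                           const DepthMap& belt,
                                           std::uint16_t minHeightMm) {
    assert(frame.size() == belt.size());
    std::vector<std::uint16_t> height(frame.size(), 0);
    for (std::size_t i = 0; i < frame.size(); ++i) {
        if (frame[i] == 0 || belt[i] == 0) continue;  // invalid reading
        if (frame[i] >= belt[i]) continue;            // at or behind the belt
        // The camera looks down on the belt, so nearer means taller.
        const auto h = static_cast<std::uint16_t>(belt[i] - frame[i]);
        if (h >= minHeightMm) height[i] = h;
    }
    return height;
}
```

Any non-zero pixel in the result is a candidate tuber pixel, and its value doubles as the height measurement used later.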

We then perform segmentation over the remaining objects to isolate individual potato ellipsoids.
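The exact segmentation method is not described here, so the following is only one simple possibility: 4-connected component labelling over the binary foreground mask, written as a breadth-first flood fill. The function name `labelRegions` and the mask layout are assumptions for the example, and this approach on its own would not separate tubers that touch each other.

```cpp
#include <cassert>
#include <cstddef>
#include <queue>
#include <utility>
#include <vector>

// Labels 4-connected foreground regions in a row-major binary mask.
// Returns the number of regions found. `labels` receives 0 for background
// pixels and 1..N for the pixels of each region.
int labelRegions(const std::vector<unsigned char>& mask, int width, int height,
                 std::vector<int>& labels) {
    assert(mask.size() == static_cast<std::size_t>(width) * height);
    labels.assign(mask.size(), 0);
    const int dx[4] = {1, -1, 0, 0};
    const int dy[4] = {0, 0, 1, -1};
    int count = 0;
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const std::size_t seed = static_cast<std::size_t>(y) * width + x;
            if (!mask[seed] || labels[seed]) continue;
            labels[seed] = ++count;  // start a new region at this pixel
            std::queue<std::pair<int, int>> frontier;
            frontier.push({x, y});
            while (!frontier.empty()) {
                const std::pair<int, int> cur = frontier.front();
                frontier.pop();
                for (int k = 0; k < 4; ++k) {
                    const int nx = cur.first + dx[k];
                    const int ny = cur.second + dy[k];
                    if (nx < 0 || ny < 0 || nx >= width || ny >= height) continue;
                    const std::size_t n = static_cast<std::size_t>(ny) * width + nx;
                    if (mask[n] && !labels[n]) {
                        labels[n] = count;
                        frontier.push({nx, ny});
                    }
                }
            }
        }
    }
    return count;
}
```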

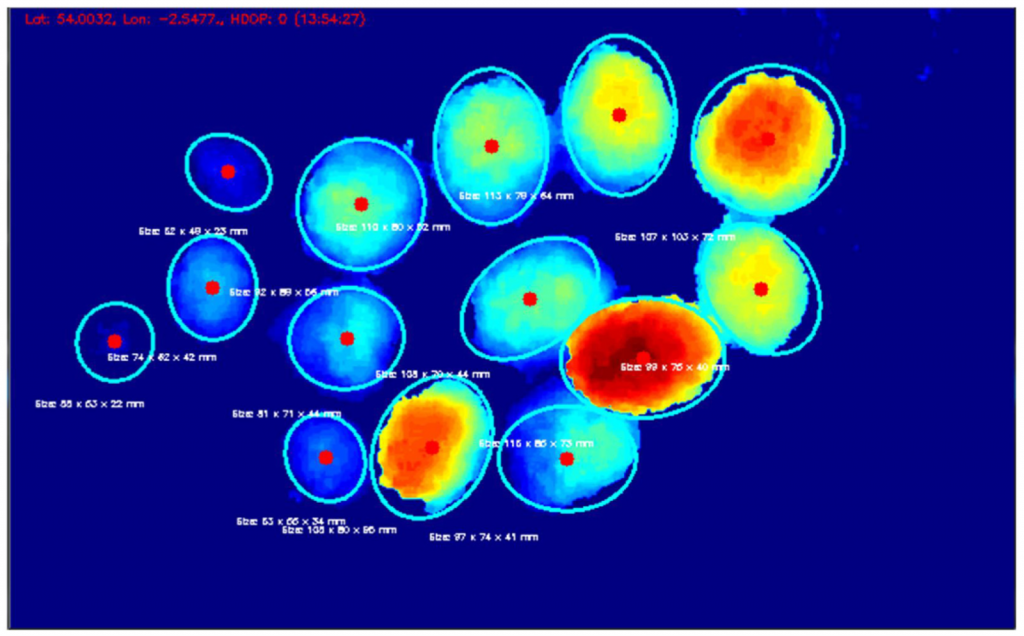

For each measured object we record the major and minor axes, along with its height (at the maximum point). In order to size-grade the potatoes, we use a grading technique devised in the Centre for Machine Vision to measure aggregate size yields [1].
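To make the three recorded measurements concrete, here is a minimal sketch of one way to obtain them from the pixels of a single segmented region. It estimates the axes from second-order image moments, using the fact that a uniformly filled ellipse with semi-axis a has variance a²/4 along that axis, so the full axis length is four times the square root of the corresponding covariance eigenvalue. This is an illustrative stand-in, not the grading technique of [1]; the `Pixel` and `TuberMeasurement` types are hypothetical, and the axes come out in pixels, so converting to millimetres would need the camera geometry.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// One foreground pixel of a segmented region (hypothetical type).
struct Pixel { int x; int y; std::uint16_t heightMm; };

// Measurements recorded per object (hypothetical type).
struct TuberMeasurement {
    double majorAxisPx;
    double minorAxisPx;
    std::uint16_t maxHeightMm;
};

TuberMeasurement measureRegion(const std::vector<Pixel>& region) {
    assert(!region.empty());
    const double n = static_cast<double>(region.size());

    // Centroid and maximum height.
    double mx = 0.0, my = 0.0;
    std::uint16_t maxH = 0;
    for (const Pixel& p : region) {
        mx += p.x;
        my += p.y;
        maxH = std::max(maxH, p.heightMm);
    }
    mx /= n;
    my /= n;

    // Covariance matrix of the pixel coordinates.
    double sxx = 0.0, syy = 0.0, sxy = 0.0;
    for (const Pixel& p : region) {
        const double dx = p.x - mx, dy = p.y - my;
        sxx += dx * dx;
        syy += dy * dy;
        sxy += dx * dy;
    }
    sxx /= n; syy /= n; sxy /= n;

    // Eigenvalues of the symmetric 2x2 matrix [[sxx, sxy], [sxy, syy]].
    const double mean = 0.5 * (sxx + syy);
    const double diff = 0.5 * (sxx - syy);
    const double root = std::sqrt(diff * diff + sxy * sxy);
    const double l1 = mean + root;
    const double l2 = std::max(0.0, mean - root);

    return {4.0 * std::sqrt(l1), 4.0 * std::sqrt(l2), maxH};
}
```

On a rasterised ellipse with semi-axes of 20 and 10 pixels, this recovers axes of roughly 40 and 20 pixels, with small discretisation error.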

The system then uses on-board GPS to register the number of potatoes detected at a given position, categorised by grade. Full results can be found in the paper, but we found the system to be accurate to within 10% of the true value, considerably more accurate than the existing estimation method.
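The GPS registration step can be pictured as a yield map keyed by position. The sketch below is purely illustrative: the `YieldMap` class, the three-grade scheme, and the idea of quantising latitude and longitude into square cells of a fixed size in degrees are all assumptions for the example, not details of the deployed system.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <map>
#include <utility>

// Hypothetical number of size grades (e.g. small / medium / large).
constexpr std::size_t kNumGrades = 3;

// Accumulates tuber counts per grade in square latitude/longitude cells.
class YieldMap {
public:
    using Counts = std::array<unsigned, kNumGrades>;

    explicit YieldMap(double cellSizeDeg) : cellSizeDeg_(cellSizeDeg) {
        assert(cellSizeDeg > 0.0);
    }

    // Registers one detected tuber of the given grade at a GPS position.
    void record(double latDeg, double lonDeg, std::size_t grade) {
        assert(grade < kNumGrades);
        ++cells_[cellOf(latDeg, lonDeg)][grade];  // new cells start at zero
    }

    // Returns the per-grade counts for the cell containing the position.
    Counts countsAt(double latDeg, double lonDeg) const {
        const auto it = cells_.find(cellOf(latDeg, lonDeg));
        return it == cells_.end() ? Counts{} : it->second;
    }

private:
    using Cell = std::pair<long, long>;

    Cell cellOf(double latDeg, double lonDeg) const {
        return {static_cast<long>(std::floor(latDeg / cellSizeDeg_)),
                static_cast<long>(std::floor(lonDeg / cellSizeDeg_))};
    }

    double cellSizeDeg_;
    std::map<Cell, Counts> cells_;
};
```

Keying on a quantised cell rather than the raw coordinates means detections made a few metres apart accumulate into the same entry, which is what a per-area yield estimate needs.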

[1] J.R.J. Lee, L.N. Smith, M.L. Smith, A mathematical morphology approach to image based 3D particle shape analysis, Mach. Vis. Appl. 16 (5) (2005).

Notes

The figures in this post are reproduced from the following paper, on which I was a co-author:

Smith, L. N., et al. (2018). Innovative 3D and 2D machine vision methods for analysis of plants and crops in the field. Computers in Industry, 97. pp. 122-131. ISSN 0166-3615. (4th Author)

This work resulted in the following patent application, on which I was listed as a named inventor:

A Crop Monitoring System and Method (GB2558029A): Filed Jul 4, 2018 [Approved]